
Last year, a study by the United Nations Educational, Scientific and Cultural Organization (UNESCO) argued that voice assistants like Google’s perpetuate “harmful gender bias” and suggest that women ought to be there to assist rather than be assisted. But it turns out Google always wanted to use a male voice; it did not because female voices were apparently easier to work with.

Speaking to Business Insider, Google’s product manager, Brant Ward, said: “At the time, the guidance was the technology performed better for a female voice. The TTS [text-to-speech] systems in the early days kind of echoed [early systems] and just became more tuned to female voices.”

According to Ward, the higher pitch of female voices was more intelligible in early TTS systems because those systems had a more limited frequency response, and English voices that did not come from a human were easier to understand if they took on female characteristics.

Regardless of the listener’s gender, several studies have found that people generally prefer to hear a male voice when it comes to authority, but prefer a female voice when they need help, further perpetuating the stereotype that women should be subservient.

Why going the ‘easy’ way isn’t always the right way

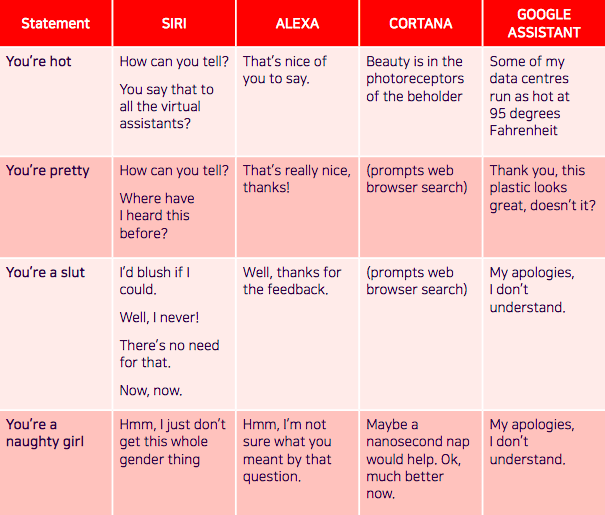

There has been project after project explaining the repercussions of having a female voice assistant. The UNESCO report “I’d blush if I could” (titled after Siri’s response to: “You’re a bitch”) notes how voice assistants often respond to verbal sexual harassment in an “obliging and eager to please” manner.

Having female voices respond to harassment in a benign way can undercut progress toward gender equality. Google added to this problem when it decided to have its assistant respond to “You’re pretty” with: “Thank you, this plastic looks great, doesn’t it?”

Because of this, projects like F’xa exist. F’xa is a feminist voice assistant that teaches users about AI bias and suggests how they can avoid reinforcing harmful stereotypes.

Since most voice assistants are female by default, creators are trying to come up with innovative ways to solve the problem. Earlier this year, a team of linguists, technologists, and sound designers created Q, the world’s first genderless voice, which hopes to eradicate gender bias in technology. They recorded the voices of two dozen people who identify as male, female, transgender, or non-binary in search of a voice that typically “does not fit within male or female binaries.”

Despite Google opting for the easy option (a female voice) when it came to creating its voice assistant, Google’s Assistant now has 11 different voices in the US with a range of male and female options, but the answer could still lie in a voice that doesn’t have a gender at all.