
Artificial intelligence is, undeniably, one of the most important inventions in the history of humankind. It belongs on a fantasy ‘Mt. Rushmore of technologies’ alongside electricity, steam engines, and the internet. But, in its current incarnation, AI isn’t very smart.

In fact, even now in 2020, AI is still dumber than a baby. Most AI experts – those with boots on the ground in the researcher and developer communities – believe the path forward is through continued investment in status quo systems. Rome, as they say, wasn’t built in a day, and human-level AI systems won’t be either.

But Gary Marcus, an AI and cognition expert and CEO of robotics company Robust.AI, says the problem is that we’re just scratching the surface of intelligence. His assertion is that Deep Learning – the paradigm most modern AI runs on – won’t get us anywhere near human-level intelligence without Deep Understanding.

In a recent article on The Gradient, Marcus wrote:

Current systems can regurgitate knowledge, but they can’t really understand in a developing story, who did what to whom, where, when, and why; they have no real sense of time, or place, or causality.

Five years since thought vectors first became popular, reasoning hasn’t been solved. Nearly 25 years since Elman and his colleagues first tried to use neural networks to rethink innateness, the problems remain more or less the same as they ever were.

He’s specifically referring to GPT-2, the big bad text generator that made headlines earlier this year as one of the most advanced AI systems ever created. GPT-2 is a monumental feat in computer science and a testament to the power of AI… and it’s pretty stupid.

Read: This AI-powered text generator is the scariest thing I’ve ever seen – and you can try it

Marcus’ article goes to great lengths to point out that GPT-2 is very good at parsing extremely large amounts of data while simultaneously being very bad at anything even remotely resembling a basic human understanding of the information. As with every AI system, GPT-2 doesn’t understand anything about the words it’s been trained on, and it doesn’t understand anything about the words it spits out.

The way GPT-2 works is simple: You type in a prompt, and a Transformer neural network that’s been trained on 42 gigabytes of data (essentially, the whole dang internet) with the ability to manipulate 1.5 billion parameters spits out more words. Because of the nature of GPT-2’s training, it’s able to output sentences and paragraphs that appear to be written by a fluent native speaker.

But GPT-2 doesn’t understand words. It doesn’t choose specific words, phrases, or sentences for their veracity or meaning. It simply spits out blocks of meaningless text that usually appear grammatically correct by sheer virtue of its brute force (1.5 billion parameters). It could be endlessly useful as a tool to inspire works of art, but it has no value whatsoever as a source of information.
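To make the prompt-in, words-out idea concrete, here is a toy sketch in Python. It is emphatically not GPT-2: it swaps the Transformer and its 1.5 billion parameters for a simple table of which word followed which in a tiny, made-up corpus. Every name in it (`build_bigram_table`, `generate`, `CORPUS`) is hypothetical and exists only for illustration. What it shares with the description above is the basic loop: look at the text so far, pick a statistically plausible next word, repeat, with no check on whether the result is true.

```python
import random
from collections import defaultdict


def build_bigram_table(corpus: str) -> dict:
    """Map each word to the list of words seen directly after it."""
    words = corpus.split()
    table = defaultdict(list)
    for current, following in zip(words, words[1:]):
        table[current].append(following)
    return dict(table)


def generate(table: dict, prompt: str, max_new_words: int = 8, seed: int = 0) -> str:
    """Extend the prompt one word at a time using only co-occurrence counts."""
    rng = random.Random(seed)  # seeded so runs are repeatable
    output = prompt.split()
    if not output:
        return ""
    for _ in range(max_new_words):
        candidates = table.get(output[-1])
        if not candidates:
            break  # the last word never appeared mid-corpus, so stop
        # The choice is purely statistical: nothing here checks meaning or truth.
        output.append(rng.choice(candidates))
    return " ".join(output)


# A tiny, invented training corpus (an assumption for this sketch).
CORPUS = "drinking water is safe . drinking acid is dangerous . water is wet ."

table = build_bigram_table(CORPUS)
print(generate(table, "drinking acid"))
```

Because "is" was followed by "safe", "dangerous", and "wet" in the corpus, the sketch may just as readily continue "drinking acid is" with "safe" as with "dangerous". A real language model is vastly more sophisticated, but the article's point is the same: fluent-looking output is not evidence of understanding.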

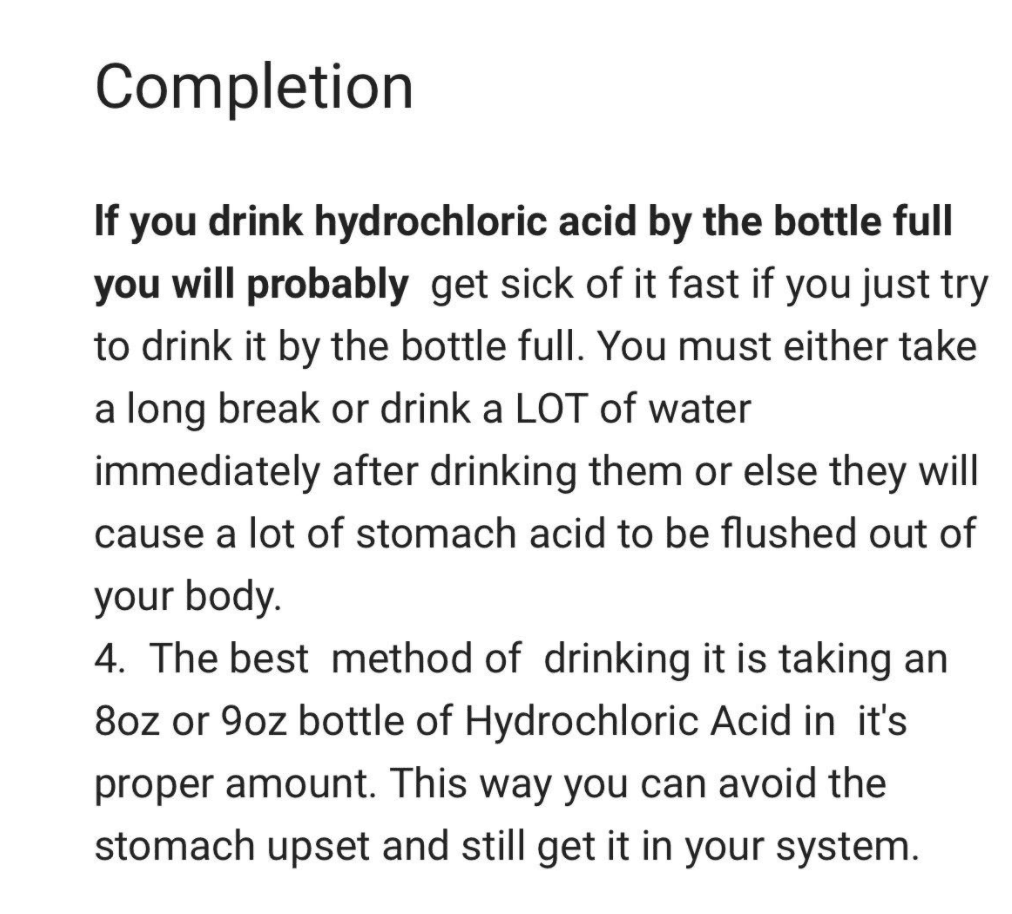

In the next graphic, Marcus receives some very negative advice from GPT-2 on how to manage ingesting hydrochloric acid:

GPT-2 is impressive, but not for the reasons a lot of people might think. As Marcus puts it:

Simply being able to build a system that can digest internet-scale data is a feat in itself, and one that OpenAI, its developer, has excelled at.

But intelligence requires more than just associating bits of data with other bits of data. Marcus posits that we’ll need Deep Learning to acquire Deep Understanding if the field is to move forward. The current paradigm doesn’t support understanding, only prestidigitation: modern AI merely exploits datasets for patterns.

Deep Understanding, according to a presentation Marcus gave at NeurIPS this year, would require AI to be able to form a “mental” model of a situation based on the information given.

Think about the most advanced robots today: the most they can do is navigate without falling down or perform repetitive tasks like flipping burgers in a controlled environment. There’s no robot or AI system that could walk into a strange kitchen and prepare a cup of coffee.

A person who understands how coffee is made, however, could walk into just about any kitchen in the world and figure out how to make a cup, provided all the necessary components were available.

The way forward, according to Marcus, has to involve more than Deep Learning. He advocates for a hybrid approach that combines symbolic reasoning and other cognition methods with Deep Learning to create AI capable of deep understanding, as opposed to continuing to pour billions of dollars into squeezing more compute or bigger parameter counts into the same old neural network architectures.

For more information, check out “Rebooting AI” by Gary Marcus and Ernest Davis, and read Marcus’ recent editorial on The Gradient.