

The most exciting breakthroughs of the twenty-first century will not occur because of technology, but because of an expanding concept of what it means to be human. — John Naisbitt

Before we dive into why more women should lead AI teams, I want to share a fascinating story I heard from Tania Biland, a third-year student at Lucerne University of Applied Sciences and Arts.

The story as narrated by Tania:

Last semester, our class got split into three different groups in order to develop a safety technology solution for Swiss or German brands:

Group 1: Only women (my group)

Group 2: Only men

Group 3: Four women and one man

After 4 weeks of work, each team had to present their work.

Group 1, composed only of women, developed a safety solution for women in the dark. As the jury was all male, we decided to tell a story using a persona, music, and videos in order to make them feel what women experience on a daily basis. We also emphasized that everyone has a mother, sister, or wife in their life and that they probably don’t want her to suffer. In the end, our solution was technologically rather simple (using light to provide safety), but it connected with the audience emotionally.

Group 2, composed only of men, presented a more high-tech solution using AI, GPS, and video conferences. They based their arguments on facts and numbers and pointed out their competitive advantages.

In Group 3, with four women and one man, the outcome didn’t seem finished. The only man in the group could not agree to be led by women, and the group therefore spent too much time discussing group dynamics instead of working.

The groups not only had different outputs but also approached the problem differently. My group (Group 1) decided to start by defining each other’s work preferences and styles in order to distribute responsibilities and keep the hierarchy as flat as possible.

The two other groups, on the other hand, elected a leader for the team. It turned out that these “leaders” were perceived more as dictators, which led to heavy conflicts: those teams spent hours discussing and arguing while our group was simply working and being productive.

What science tells us about gender differences

The science on gender differences and their effects on behavior is still evolving and has not yet produced a clear set of explanations for different behaviors. Across most of the research, two main factors appear to influence behavior:

- Potential physiological differences between men and women

- Social norms and pressures forming different behaviors

In the above story, as told by Tania, the women developed their solution in a Collaborative Leadership Style (adhocracy culture), adapting the leading position to the task at hand with an almost flat hierarchy. They built their argument by involving all stakeholders (in this case the users, that is, mothers and wives) and showing empathy for their problems. They saw the bigger picture and also built a simpler solution that was actually finished.

The story helped me connect the dots on why most AI projects never move from the prototype phase to a real-world application.

Why are AI products not adopted?

Based on my experience, there are three main reasons why most AI and Machine Learning (ML) solutions do not move from the prototyping phase to the real world:

- Lack of trust: One of the biggest difficulties for AI or ML products is a lack of trust. Millions of dollars have been spent on prototyping, but with very little success in real-world launches. Trust is one of the most fundamental values in doing business and serving customers, and Artificial Intelligence is the most heavily debated technology when it comes to ethical concerns and related trust issues. Trust comes from involving different opinions and parties throughout the entire development process, which is not done in the prototype phase.

- The complexity of a launch: Building a prototype is easy, but dozens of external factors need to be considered when moving into the real world. Besides the technical challenges, other areas such as marketing, design, and sales need to be integrated with the prototyping.

- AI products often do not take into account all stakeholders: I have heard that Alexa and Google Home are being used by men against their spouses in instances of domestic violence, for example by turning the music up really loud or by locking them out of their homes. It is possible that in an environment with mostly male engineers building these products, no one is thinking about these kinds of scenarios. Additionally, there are many instances of artificial intelligence and data sensors being biased, sexist, and racist [1].

Interestingly, none of the three points relates to technical challenges, and all of them can be overcome by creating the right team.

How can AI be adopted more successfully?

In order to solve the above challenges and build more successful AI products, we need to focus on a more collaborative and community-driven approach.

Such an approach takes into account opinions from different stakeholders, especially those who are under-represented. Below are the steps to achieve that:

Step 1. Involve different groups, especially women from the middle of the talent pyramid

In technology, most companies focus on hiring people at the top of the talent pyramid, where, for primarily historical reasons, there are fewer women. For example, most Computer Science classes have fewer than 10 percent women. However, many talented women are hidden in the middle of the pyramid, educating themselves through online courses but lacking opportunities and encouragement.

To give an example, I was talking with the president of Geek Girls Carrot, an organization promoting women in tech. They were organizing an AI workshop to which more than 125 women applied, but only 25 seats were available, so naturally they had to turn away more than 100 talented women.

Imagine if we could involve most of those other 100 women, instead of only the women at the top. This would give many more women the opportunity to work in new technologies like AI.

Step 2. Build a communal and collaborative bottom-up team with different stakeholders

Next, we need more collaboration between men and women as well as different stakeholders to launch products successfully in the real market. This can be achieved through forming inclusive project communities that build AI products based on common values, beliefs, and often a bigger vision.

To illustrate the point: in the past six months, we brought together a group of more than 50 male and female students to build an ML model. Within a short time, members started collaborating and helping each other to build the models. Four subgroups formed, and one of them was driven by two women and supported by two men (data taggers). The other groups were all men. In four months, the group with the two women and two men built the most accurate model. From the beginning, the women were much more willing to collaborate than the men. Even more interestingly, I saw that the men in that group also ended up behaving more collaboratively because of the women in the group. This was fascinating!

Step 3. Create the right Organizational Structure for collaboration

What if we could create organizational structures and practices that don’t need empowerment because, by design, everybody is powerful and no one is powerless? I have seen that this can be achieved by connecting intrinsic and extrinsic motivations (neither of which is tied to money) and creating an incentive structure that is not competitive.

In my case, I built the community with the mentor at the top of the pyramid, followed by the community manager, then the engineers building models, and finally the data taggers. Members at each level were striving to move up the ladder to the next level, which created an extrinsic motivation. However, the monetary compensation for people on the same level was the same. This fostered collaboration.

Why women should lead AI teams

In the story from the beginning, the female group followed a more Collaborative Leadership Style by showing more customer empathy and willingness to collaborate.

In the limited experiment of the solar project, we also saw that using a community to build products helped foster collaboration and build trust among community members.

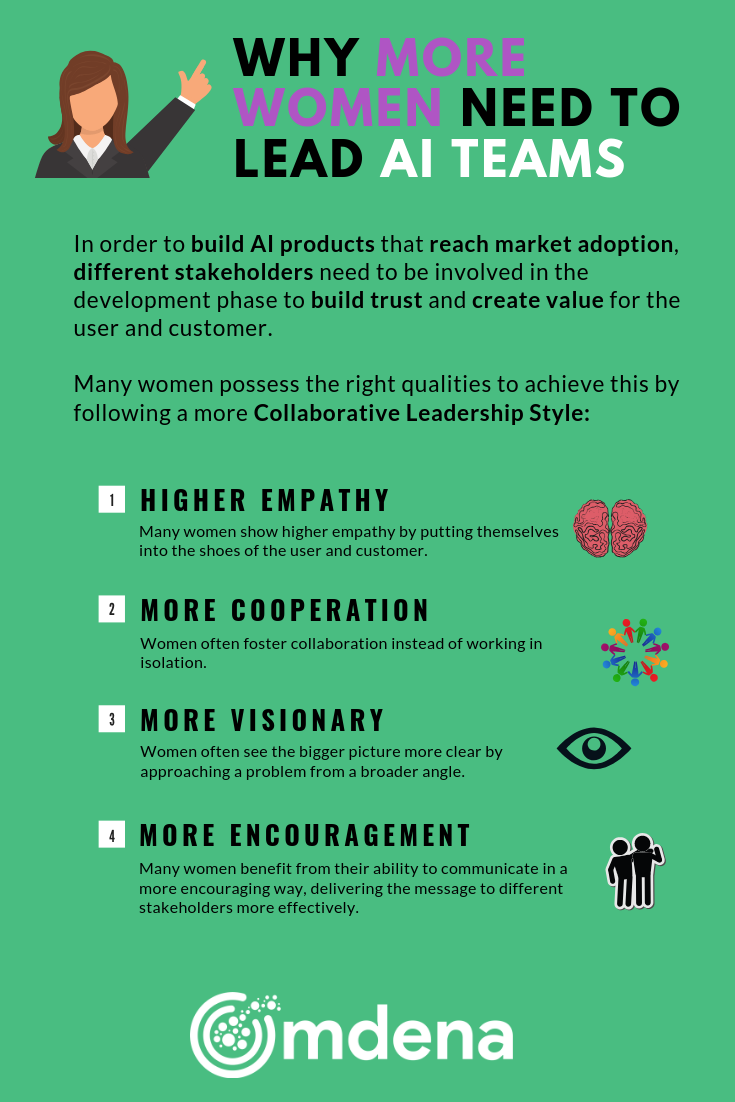

While none of the mentioned qualities can be generalized, the following graphic aims to summarize some of the reasons why many women are a great fit for Collaborative Leadership.

In conclusion, I argue that we should think more holistically and do our best to create an environment where we look beyond gender, race, and cultural background and focus on how we can collaborate as humans to build a better future.

This article was originally published on Towards Data Science by Rudradeb Mitra. He started his career as an AI researcher and published 10 research papers. After graduating from the University of Cambridge, he was part of building various startups in the US, the UK, Belgium, and Poland. His current focus is driving innovation bottom-up and solving various social problems around the world using AI through global collaboration of changemakers from over 75 countries. He has also written a book on AI and has been invited to speak at over 100 events. Besides that, he has no phone, meditates a couple of hours a day, and lives with no goals, in a state of Wu wei.